Last month we looked at Artifactory, both Commercial and OSS, and how it could be used to host artifacts and containers. Let’s also look at the other big artifact management suite, Sonatype Nexus, and see how it compares.

While I managed to run containerized Nexus on a variety of platforms and with a variety of methods, I was ultimately unable to get docker repositories exposed in the cluster with the existing stable charts.

However, for general artifact management, one may use Nexus this way just fine.

Setup

First, let’s get an LKE cluster spun up to host our work, similar to how we did for Artifactory. (If you do not have the linode-cli app, see “CLI Support” in our LKE post.)

Before you begin, you’ll want to check if you have existing clusters. In fact, just checking on clusters informed me the CLI was out of date:

$ linode-cli lke clusters-list

The API responded with version 4.11.0, which is newer than the CLI's version of 4.9.0. Please update the CLI to get access to the newest features. You can update with a simple `pip install --upgrade linode-cli`

┌────┬───────┬────────┐

│ id │ label │ region │

└────┴───────┴────────┘

I did a quick upgrade to latest and tried again:

$ sudo pip install --upgrade linode-cli

Password:

WARNING: The directory '/Users/johnsi10/Library/Caches/pip/http' or its parent directory is not owned by the current user and the cache has been disabled. Please check the permissions and owner of that directory. If executing pip with sudo, you may want sudo's -H flag.

WARNING: The directory '/Users/johnsi10/Library/Caches/pip' or its parent directory is not owned by the current user and caching wheels has been disabled. check the permissions and owner of that directory. If executing pip with sudo, you may want sudo's -H flag.

Collecting linode-cli

Downloading https://files.pythonhosted.org/packages/6f/e3/1d2681be13aec37880e2ae200a3caaf6c82ae6de6b138973989197310097/linode_cli-2.12.0-py2.py3-none-any.whl (149kB)

|████████████████████████████████| 153kB 1.8MB/s

Requirement already satisfied, skipping upgrade: colorclass in /usr/local/lib/python3.7/site-packages (from linode-cli) (2.2.0)

Requirement already satisfied, skipping upgrade: requests in /usr/local/lib/python3.7/site-packages (from linode-cli) (2.22.0)

Requirement already satisfied, skipping upgrade: enum34 in /usr/local/lib/python3.7/site-packages (from linode-cli) (1.1.6)

Requirement already satisfied, skipping upgrade: terminaltables in /usr/local/lib/python3.7/site-packages (from linode-cli) (3.1.0)

Requirement already satisfied, skipping upgrade: PyYAML in /usr/local/lib/python3.7/site-packages (from linode-cli) (5.1.2)

Requirement already satisfied, skipping upgrade: chardet<3.1.0,>=3.0.2 in /usr/local/lib/python3.7/site-packages (from requests->linode-cli) (3.0.4)

Requirement already satisfied, skipping upgrade: certifi>=2017.4.17 in /usr/local/lib/python3.7/site-packages (from requests->linode-cli) (2019.11.28)

Requirement already satisfied, skipping upgrade: idna<2.9,>=2.5 in /usr/local/lib/python3.7/site-packages (from requests->linode-cli) (2.8)

Requirement already satisfied, skipping upgrade: urllib3!=1.25.0,!=1.25.1,<1.26,>=1.21.1 in /usr/local/lib/python3.7/site-packages (from requests->linode-cli) (1.25.7)

Installing collected packages: linode-cli

Found existing installation: linode-cli 2.10.3

Uninstalling linode-cli-2.10.3:

Successfully uninstalled linode-cli-2.10.3

Successfully installed linode-cli-2.12.0

$ linode-cli lke clusters-list

┌────┬───────┬────────┐

│ id │ label │ region │

└────┴───────┴────────┘

Next, seeing as we presently have no clusters, let’s create one.

Surprisingly, the documentation on using the linode-cli to create a cluster is still incorrect and/or non-existent. So hopefully this helps some folks googling for the answer:

$ linode-cli lke cluster-create --label NexusK8s --region us-central --version 1.16 --node_pools.type g6-standard-2 --node_pools.count 3

┌─────┬──────────┬────────────┐

│ id │ label │ region │

├─────┼──────────┼────────────┤

│ 829 │ NexusK8s │ us-central │

└─────┴──────────┴────────────┘

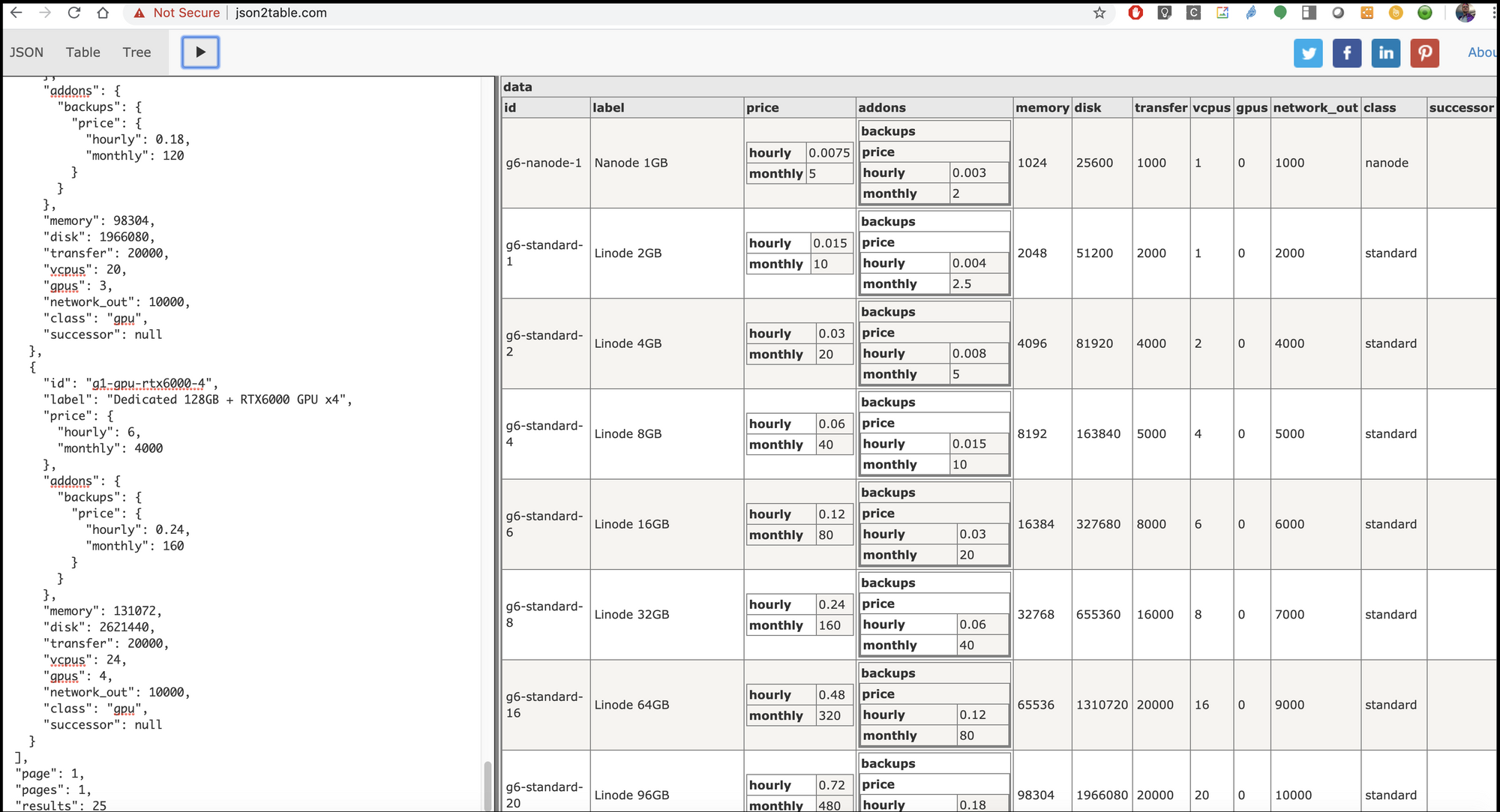

FYI: The valid node_pools.type values can be looked up with an API call:

$ curl https://api.linode.com/v4/linode/types | jq

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

100 7626 100 7626 0 0 30861 0 --:--:-- --:--:-- --:--:-- 30874

{

"data": [

{

"id": "g6-nanode-1",

"label": "Nanode 1GB",

"price": {

"hourly": 0.0075,

"monthly": 5

},

"addons": {

"backups": {

"price": {

"hourly": 0.003,

"monthly": 2

}

}

},

"memory": 1024,

"disk": 25600,

"transfer": 1000,

"vcpus": 1,

"gpus": 0,

"network_out": 1000,

"class": "nanode",

"successor": null

},

{

"id": "g6-standard-1",

"label": "Linode 2GB",

"price": {

"hourly": 0.015,

"monthly": 10

},

"addons": {

"backups": {

"price": {

"hourly": 0.004,

"monthly": 2.5

}

}

},

"memory": 2048,

"disk": 51200,

"transfer": 2000,

"vcpus": 1,

"gpus": 0,

"network_out": 2000,

"class": "standard",

"successor": null

},

{

"id": "g6-standard-2",

"label": "Linode 4GB",

"price": {

"hourly": 0.03,

"monthly": 20

},

"addons": {

"backups": {

"price": {

"hourly": 0.008,

"monthly": 5

}

}

},

"memory": 4096,

"disk": 81920,

"transfer": 4000,

"vcpus": 2,

"gpus": 0,

"network_out": 4000,

"class": "standard",

"successor": null

},

{

"id": "g6-standard-4",

"label": "Linode 8GB",

"price": {

"hourly": 0.06,

"monthly": 40

},

"addons": {

"backups": {

"price": {

"hourly": 0.015,

"monthly": 10

}

}

},

"memory": 8192,

"disk": 163840,

"transfer": 5000,

"vcpus": 4,

"gpus": 0,

"network_out": 5000,

"class": "standard",

"successor": null

},

{

"id": "g6-standard-6",

"label": "Linode 16GB",

"price": {

"hourly": 0.12,

"monthly": 80

},

"addons": {

"backups": {

"price": {

"hourly": 0.03,

"monthly": 20

}

}

},

"memory": 16384,

"disk": 327680,

"transfer": 8000,

"vcpus": 6,

"gpus": 0,

"network_out": 6000,

"class": "standard",

"successor": null

},

{

"id": "g6-standard-8",

"label": "Linode 32GB",

"price": {

"hourly": 0.24,

"monthly": 160

},

"addons": {

"backups": {

"price": {

"hourly": 0.06,

"monthly": 40

}

}

},

"memory": 32768,

"disk": 655360,

"transfer": 16000,

"vcpus": 8,

"gpus": 0,

"network_out": 7000,

"class": "standard",

"successor": null

},

{

"id": "g6-standard-16",

"label": "Linode 64GB",

"price": {

"hourly": 0.48,

"monthly": 320

},

"addons": {

"backups": {

"price": {

"hourly": 0.12,

"monthly": 80

}

}

},

"memory": 65536,

"disk": 1310720,

"transfer": 20000,

"vcpus": 16,

"gpus": 0,

"network_out": 9000,

"class": "standard",

"successor": null

},

{

"id": "g6-standard-20",

"label": "Linode 96GB",

"price": {

"hourly": 0.72,

"monthly": 480

},

"addons": {

"backups": {

"price": {

"hourly": 0.18,

"monthly": 120

}

}

},

"memory": 98304,

"disk": 1966080,

"transfer": 20000,

"vcpus": 20,

"gpus": 0,

"network_out": 10000,

"class": "standard",

"successor": null

},

{

"id": "g6-standard-24",

"label": "Linode 128GB",

"price": {

"hourly": 0.96,

"monthly": 640

},

"addons": {

"backups": {

"price": {

"hourly": 0.24,

"monthly": 160

}

}

},

"memory": 131072,

"disk": 2621440,

"transfer": 20000,

"vcpus": 24,

"gpus": 0,

"network_out": 11000,

"class": "standard",

"successor": null

},

{

"id": "g6-standard-32",

"label": "Linode 192GB",

"price": {

"hourly": 1.44,

"monthly": 960

},

"addons": {

"backups": {

"price": {

"hourly": 0.36,

"monthly": 240

}

}

},

"memory": 196608,

"disk": 3932160,

"transfer": 20000,

"vcpus": 32,

"gpus": 0,

"network_out": 12000,

"class": "standard",

"successor": null

},

{

"id": "g6-highmem-1",

"label": "Linode 24GB",

"price": {

"hourly": 0.09,

"monthly": 60

},

"addons": {

"backups": {

"price": {

"hourly": 0.0075,

"monthly": 5

}

}

},

"memory": 24576,

"disk": 20480,

"transfer": 5000,

"vcpus": 1,

"gpus": 0,

"network_out": 5000,

"class": "highmem",

"successor": null

},

{

"id": "g6-highmem-2",

"label": "Linode 48GB",

"price": {

"hourly": 0.18,

"monthly": 120

},

"addons": {

"backups": {

"price": {

"hourly": 0.015,

"monthly": 10

}

}

},

"memory": 49152,

"disk": 40960,

"transfer": 6000,

"vcpus": 2,

"gpus": 0,

"network_out": 6000,

"class": "highmem",

"successor": null

},

{

"id": "g6-highmem-4",

"label": "Linode 90GB",

"price": {

"hourly": 0.36,

"monthly": 240

},

"addons": {

"backups": {

"price": {

"hourly": 0.03,

"monthly": 20

}

}

},

"memory": 92160,

"disk": 92160,

"transfer": 7000,

"vcpus": 4,

"gpus": 0,

"network_out": 7000,

"class": "highmem",

"successor": null

},

{

"id": "g6-highmem-8",

"label": "Linode 150GB",

"price": {

"hourly": 0.72,

"monthly": 480

},

"addons": {

"backups": {

"price": {

"hourly": 0.06,

"monthly": 40

}

}

},

"memory": 153600,

"disk": 204800,

"transfer": 8000,

"vcpus": 8,

"gpus": 0,

"network_out": 8000,

"class": "highmem",

"successor": null

},

{

"id": "g6-highmem-16",

"label": "Linode 300GB",

"price": {

"hourly": 1.44,

"monthly": 960

},

"addons": {

"backups": {

"price": {

"hourly": 0.12,

"monthly": 80

}

}

},

"memory": 307200,

"disk": 348160,

"transfer": 9000,

"vcpus": 16,

"gpus": 0,

"network_out": 9000,

"class": "highmem",

"successor": null

},

{

"id": "g6-dedicated-2",

"label": "Dedicated 4GB",

"price": {

"hourly": 0.045,

"monthly": 30

},

"addons": {

"backups": {

"price": {

"hourly": 0.008,

"monthly": 5

}

}

},

"memory": 4096,

"disk": 81920,

"transfer": 4000,

"vcpus": 2,

"gpus": 0,

"network_out": 4000,

"class": "dedicated",

"successor": null

},

{

"id": "g6-dedicated-4",

"label": "Dedicated 8GB",

"price": {

"hourly": 0.09,

"monthly": 60

},

"addons": {

"backups": {

"price": {

"hourly": 0.015,

"monthly": 10

}

}

},

"memory": 8192,

"disk": 163840,

"transfer": 5000,

"vcpus": 4,

"gpus": 0,

"network_out": 5000,

"class": "dedicated",

"successor": null

},

{

"id": "g6-dedicated-8",

"label": "Dedicated 16GB",

"price": {

"hourly": 0.18,

"monthly": 120

},

"addons": {

"backups": {

"price": {

"hourly": 0.03,

"monthly": 20

}

}

},

"memory": 16384,

"disk": 327680,

"transfer": 6000,

"vcpus": 8,

"gpus": 0,

"network_out": 6000,

"class": "dedicated",

"successor": null

},

{

"id": "g6-dedicated-16",

"label": "Dedicated 32GB",

"price": {

"hourly": 0.36,

"monthly": 240

},

"addons": {

"backups": {

"price": {

"hourly": 0.06,

"monthly": 40

}

}

},

"memory": 32768,

"disk": 655360,

"transfer": 7000,

"vcpus": 16,

"gpus": 0,

"network_out": 7000,

"class": "dedicated",

"successor": null

},

{

"id": "g6-dedicated-32",

"label": "Dedicated 64GB",

"price": {

"hourly": 0.72,

"monthly": 480

},

"addons": {

"backups": {

"price": {

"hourly": 0.12,

"monthly": 80

}

}

},

"memory": 65536,

"disk": 1310720,

"transfer": 8000,

"vcpus": 32,

"gpus": 0,

"network_out": 8000,

"class": "dedicated",

"successor": null

},

{

"id": "g6-dedicated-48",

"label": "Dedicated 96GB",

"price": {

"hourly": 1.08,

"monthly": 720

},

"addons": {

"backups": {

"price": {

"hourly": 0.18,

"monthly": 120

}

}

},

"memory": 98304,

"disk": 1966080,

"transfer": 9000,

"vcpus": 48,

"gpus": 0,

"network_out": 9000,

"class": "dedicated",

"successor": null

},

{

"id": "g1-gpu-rtx6000-1",

"label": "Dedicated 32GB + RTX6000 GPU x1",

"price": {

"hourly": 1.5,

"monthly": 1000

},

"addons": {

"backups": {

"price": {

"hourly": 0.06,

"monthly": 40

}

}

},

"memory": 32768,

"disk": 655360,

"transfer": 16000,

"vcpus": 8,

"gpus": 1,

"network_out": 10000,

"class": "gpu",

"successor": null

},

{

"id": "g1-gpu-rtx6000-2",

"label": "Dedicated 64GB + RTX6000 GPU x2",

"price": {

"hourly": 3,

"monthly": 2000

},

"addons": {

"backups": {

"price": {

"hourly": 0.12,

"monthly": 80

}

}

},

"memory": 65536,

"disk": 1310720,

"transfer": 20000,

"vcpus": 16,

"gpus": 2,

"network_out": 10000,

"class": "gpu",

"successor": null

},

{

"id": "g1-gpu-rtx6000-3",

"label": "Dedicated 96GB + RTX6000 GPU x3",

"price": {

"hourly": 4.5,

"monthly": 3000

},

"addons": {

"backups": {

"price": {

"hourly": 0.18,

"monthly": 120

}

}

},

"memory": 98304,

"disk": 1966080,

"transfer": 20000,

"vcpus": 20,

"gpus": 3,

"network_out": 10000,

"class": "gpu",

"successor": null

},

{

"id": "g1-gpu-rtx6000-4",

"label": "Dedicated 128GB + RTX6000 GPU x4",

"price": {

"hourly": 6,

"monthly": 4000

},

"addons": {

"backups": {

"price": {

"hourly": 0.24,

"monthly": 160

}

}

},

"memory": 131072,

"disk": 2621440,

"transfer": 20000,

"vcpus": 24,

"gpus": 4,

"network_out": 10000,

"class": "gpu",

"successor": null

}

],

"page": 1,

"pages": 1,

"results": 25

}

Pro-tip: you can use something like json2table.com to view it in an easier to read tabular format:
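If you would rather stay in the terminal, the same listing can be condensed with a few lines of Python. This is only a sketch: the function names are my own, and the inline sample is a trimmed copy of two entries from the output above rather than a live API call.

```python
import json

# Trimmed sample of the /v4/linode/types response shown above.
SAMPLE = json.loads("""
{"data": [
  {"id": "g6-nanode-1", "label": "Nanode 1GB", "price": {"hourly": 0.0075, "monthly": 5},
   "memory": 1024, "vcpus": 1, "class": "nanode"},
  {"id": "g6-standard-2", "label": "Linode 4GB", "price": {"hourly": 0.03, "monthly": 20},
   "memory": 4096, "vcpus": 2, "class": "standard"}
]}
""")


def summarize_types(payload, plan_class=None):
    """Return (id, label, memory_mb, vcpus, monthly_usd) per type, optionally filtered by class."""
    rows = []
    for entry in payload["data"]:
        if plan_class is not None and entry["class"] != plan_class:
            continue
        rows.append((entry["id"], entry["label"], entry["memory"],
                     entry["vcpus"], entry["price"]["monthly"]))
    return rows


def format_table(rows):
    """Render the rows as fixed-width text, one type per line."""
    template = "{:<18} {:<14} {:>9} {:>5} {:>9}"
    lines = [template.format("id", "label", "memory_mb", "vcpus", "usd_month")]
    lines.extend(template.format(*row) for row in rows)
    return "\n".join(lines)


print(format_table(summarize_types(SAMPLE, plan_class="standard")))
```

To use it on the live listing, swap SAMPLE for the parsed curl output (for example by reading stdin with json.load).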

We can now download the config and test it.

$ linode-cli lke clusters-list

┌─────┬──────────┬────────────┐

│ id │ label │ region │

├─────┼──────────┼────────────┤

│ 825 │ NexusK8s │ us-central │

└─────┴──────────┴────────────┘

$ linode-cli lke kubeconfig-view 829 | tail -n2 | head -n1 | sed 's/.\{8\}$//' | sed 's/^.\{8\}//' | base64 --decode > ~/.kube/config

$ kubectl get nodes

NAME STATUS ROLES AGE VERSION

lke829-1021-5e148175dbe5 Ready <none> 12m v1.16.2

lke829-1021-5e148175e213 Ready <none> 12m v1.16.2

lke829-1021-5e148175e753 Ready <none> 12m v1.16.2
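The tail/head/sed pipeline above depends on the exact width of the table borders. A slightly less brittle alternative, sketched here in Python under the assumption that the kubeconfig is the longest base64 run in the CLI output, is to pick that run out with a regular expression and decode it. The function name and the fake table in the demo are illustrative only.

```python
import base64
import re


def extract_kubeconfig(cli_output):
    """Decode the longest base64-looking run found in the CLI table output."""
    candidates = re.findall(r"[A-Za-z0-9+/]{16,}={0,2}", cli_output)
    if not candidates:
        raise ValueError("no base64 payload found in the CLI output")
    return base64.b64decode(max(candidates, key=len)).decode("utf-8")


# Demo with a made-up table that mimics the shape of the CLI output.
encoded = base64.b64encode(b"apiVersion: v1\nkind: Config\n").decode("ascii")
fake_table = (
    "┌────────────┐\n│ kubeconfig │\n├────────────┤\n│ "
    + encoded
    + " │\n└────────────┘\n"
)
print(extract_kubeconfig(fake_table))
```

In real use the input would be the captured output of the kubeconfig-view command, and the result would be written to ~/.kube/config.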

Pro-tip: Installing the linode-cli. While there does exist a “CLI” you can install with apt-get install linode-cli, it’s just an older CLI, “linode”, for managing some core features. To install the linode-cli we use here, first ensure you have pip installed (sudo apt-get install python-pip) and then install with pip (sudo pip install linode-cli). You can also download and build from source (github).

Set up Helm

As you may recall, Helm/Tiller 2.x doesn’t work out of the box with K8s 1.16, so we have to install Tiller manually.

First, set up the RBAC roles:

$ cat ~/Documents/rbac-config.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  name: tiller
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: tiller
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
  - kind: ServiceAccount
    name: tiller
    namespace: kube-system

$ kubectl create -f ~/Documents/rbac-config.yaml

serviceaccount/tiller created

clusterrolebinding.rbac.authorization.k8s.io/tiller created

Then install tiller with the proper role:

$ cat helm_init.yaml

---
apiVersion: apps/v1
kind: Deployment
metadata:
  creationTimestamp: null
  labels:
    app: helm
    name: tiller
  name: tiller-deploy
  namespace: kube-system
spec:
  replicas: 1
  strategy: {}
  selector:
    matchLabels:
      app: helm
      name: tiller
  template:
    metadata:
      creationTimestamp: null
      labels:
        app: helm
        name: tiller
    spec:
      automountServiceAccountToken: true
      containers:
      - env:
        - name: TILLER_NAMESPACE
          value: kube-system
        - name: TILLER_HISTORY_MAX
          value: "200"
        image: gcr.io/kubernetes-helm/tiller:v2.14.3
        imagePullPolicy: IfNotPresent
        livenessProbe:
          httpGet:
            path: /liveness
            port: 44135
          initialDelaySeconds: 1
          timeoutSeconds: 1
        name: tiller
        ports:
        - containerPort: 44134
          name: tiller
        - containerPort: 44135
          name: http
        readinessProbe:
          httpGet:
            path: /readiness
            port: 44135
          initialDelaySeconds: 1
          timeoutSeconds: 1
        resources: {}
      serviceAccountName: tiller
status: {}
---
apiVersion: v1
kind: Service
metadata:
  creationTimestamp: null
  labels:
    app: helm
    name: tiller
  name: tiller-deploy
  namespace: kube-system
spec:
  ports:
  - name: tiller
    port: 44134
    targetPort: tiller
  selector:
    app: helm
    name: tiller
  type: ClusterIP
status:
  loadBalancer: {}

$ kubectl apply -f ~/Documents/helm_init.yaml

deployment.apps/tiller-deploy created

service/tiller-deploy created

You will see the Tiller version in the file above is hardcoded to 2.14.3. You can change that to match your local helm client. But realize that Helm 3 doesn’t use Tiller.
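Matching the image tag to the local client can be scripted. The sketch below (function names are mine) pulls the SemVer out of a Helm 2 style client version line and builds the corresponding Tiller image reference. It assumes the Helm 2 output format and the gcr.io/kubernetes-helm/tiller repository used in the manifest above.

```python
import re

TILLER_REPO = "gcr.io/kubernetes-helm/tiller"  # repository used in the manifest above


def client_semver(helm_version_output):
    """Return the client SemVer (e.g. 'v2.14.3') from Helm 2 `helm version` output."""
    for line in helm_version_output.splitlines():
        if line.startswith("Client:"):
            match = re.search(r'SemVer:"(v[0-9]+\.[0-9]+\.[0-9]+[^"]*)"', line)
            if match:
                return match.group(1)
    raise ValueError("no Client SemVer found; is this Helm 2 output?")


def tiller_image(helm_version_output):
    """Build the Tiller image reference matching the local Helm 2 client."""
    version = client_semver(helm_version_output)
    if not version.startswith("v2."):
        raise ValueError("Tiller only applies to Helm 2, got " + version)
    return TILLER_REPO + ":" + version


# Sample client line in the Helm 2 format.
sample = (
    'Client: &version.Version{SemVer:"v2.14.3", '
    'GitCommit:"0e7f3b6637f7af8fcfddb3d2941fcc7cbebb0085", GitTreeState:"clean"}'
)
print(tiller_image(sample))
```

The returned string is what would go in the image: field of the Deployment.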

Verify things are running:

$ kubectl get pods --all-namespaces | grep tiller

kube-system tiller-deploy-86475f6c77-rqtkv 1/1 Running 0 36s

$ helm version

Client: &version.Version{SemVer:"v2.14.3", GitCommit:"0e7f3b6637f7af8fcfddb3d2941fcc7cbebb0085", GitTreeState:"clean"}

Server: &version.Version{SemVer:"v2.14.3", GitCommit:"0e7f3b6637f7af8fcfddb3d2941fcc7cbebb0085", GitTreeState:"clean"}

Setup ACR with an Image

Let's take a quick moment to create a container image somewhere we can proxy later. You can absolutely use Docker Hub for this (https://hub.docker.com/). However, I'm far more comfortable testing with an ACR I can easily destroy later.

Once created, we’ll need the admin user for pushing/pulling from Nexus:

We should now be able to use this repo with:

Url: https://lkedemocr.azurecr.io

User: lkedemocr

Pass: h2gCMnqTgh67dpX3ZqJ0OwgKdN=cYAL5

Verify we can reach this repo:

$ az acr login --name lkedemocr

Unable to get AAD authorization tokens with message: An error occurred: CONNECTIVITY_REFRESH_TOKEN_ERROR

Access to registry 'lkedemocr.azurecr.io' was denied. Response code: 401. Please try running 'az login' again to refresh permissions.

Unable to get admin user credentials with message: The resource with name 'lkedemocr' and type 'Microsoft.ContainerRegistry/registries' could not be found in subscription 'xxxxxxxx’'.

Username: lkedemocr

Password:

Login Succeeded

Note: On a second pass, I couldn't log in this way and needed to run “az login” first.

$ docker images | head -n5

REPOSITORY TAG IMAGE ID CREATED SIZE

freeboard latest 3162f871ef95 2 weeks ago 928MB

freeboard1 latest ff169793756b 2 weeks ago 928MB

uscdaksdev5cr.azurecr.io/skaffold-project/skaffold-example v1.0.0-21-g4dd6b59e-dirty b85e9ef75d32 2 weeks ago 7.55MB

gcr.io/api-project-799042244963/skaffold-example v1.0.0-21-g4dd6b59e-dirty b85e9ef75d32 2 weeks ago 7.55MB

$ docker tag freeboard:latest lkedemocr.azurecr.io/freeboard:testing

$ docker push lkedemocr.azurecr.io/freeboard:testing

The push refers to repository [lkedemocr.azurecr.io/freeboard]

b9be68ae8c02: Pushed

7c4e4e0f1ff3: Pushed

a0bbb150aa4a: Pushing [================================================> ] 17.18MB/17.77MB

16e71426ee51: Pushing [==================================================>] 19.24MB

Set up Containerized Nexus OSS

We need to adjust the values on the helm chart to enable a local port-forward:

$ cat ~/Downloads/values_01.yaml

statefulset:
  enabled: false
replicaCount: 1
# By default deploymentStrategy is set to rollingUpdate with maxSurge of 25% and maxUnavailable of 25% . you can change type to `Recreate` or can uncomment `rollingUpdate` specification and adjust them to your usage.
deploymentStrategy: {}
  # rollingUpdate:
  #   maxSurge: 25%
  #   maxUnavailable: 25%
  # type: RollingUpdate

nexus:
  imageName: quay.io/travelaudience/docker-nexus
  imageTag: 3.17.0
  imagePullPolicy: IfNotPresent
  env:
    - name: install4jAddVmParams
      value: "-Xms1200M -Xmx1200M -XX:MaxDirectMemorySize=2G -XX:+UnlockExperimentalVMOptions -XX:+UseCGroupMemoryLimitForHeap"
  # nodeSelector:
  #   cloud.google.com/gke-nodepool: default-pool
  resources: {}
    # requests:
      ## Based on https://support.sonatype.com/hc/en-us/articles/115006448847#mem
      ## and https://twitter.com/analytically/status/894592422382063616:
      ## Xms == Xmx
      ## Xmx <= 4G
      ## MaxDirectMemory >= 2G
      ## Xmx + MaxDirectMemory <= RAM * 2/3 (hence the request for 4800Mi)
      ## MaxRAMFraction=1 is not being set as it would allow the heap
      ## to use all the available memory.
      # cpu: 250m
      # memory: 4800Mi
  # The ports should only be changed if the nexus image uses a different port
  dockerPort: 5003
  nexusPort: 8081
  service:
    type: NodePort
    # clusterIP: None
  # annotations: {}
    ## When using LoadBalancer service type, use the following AWS certificate from ACM
    ## https://aws.amazon.com/documentation/acm/
    # service.beta.kubernetes.io/aws-load-balancer-ssl-cert: "arn:aws:acm:eu-west-1:123456789:certificate/abc123-abc123-abc123-abc123"
    # service.beta.kubernetes.io/aws-load-balancer-backend-protocol: "https"
    # service.beta.kubernetes.io/aws-load-balancer-backend-port: "https"
  ## When using LoadBalancer service type, whitelist these source IP ranges
  ## https://kubernetes.io/docs/tasks/access-application-cluster/configure-cloud-provider-firewall/
  # loadBalancerSourceRanges:
  #   - 192.168.1.10/32
  # labels: {}
  # securityContext:
  #   fsGroup: 2000
  podAnnotations: {}
  livenessProbe:
    initialDelaySeconds: 30
    periodSeconds: 30
    failureThreshold: 6
    # timeoutSeconds: 10
    path: /
  readinessProbe:
    initialDelaySeconds: 30
    periodSeconds: 30
    failureThreshold: 6
    # timeoutSeconds: 10
    path: /
  # hostAliases allows the modification of the hosts file inside a container
  hostAliases: []
  # - ip: "192.168.1.10"
  #   hostnames:
  #   - "example.com"
  #   - "www.example.com"

route:
  enabled: false
  name: docker
  portName: docker
  labels:
  annotations:
  # path: /docker

nexusProxy:
  enabled: true
  # svcName: proxy-svc
  imageName: quay.io/travelaudience/docker-nexus-proxy
  imageTag: 2.5.0
  imagePullPolicy: IfNotPresent
  port: 8080
  targetPort: 8080
  # labels: {}
  env:
    nexusDockerHost: 127.0.0.1
    nexusHttpHost: 127.0.0.1
    enforceHttps: false
    cloudIamAuthEnabled: false
  ## If cloudIamAuthEnabled is set to true uncomment the variables below and remove this line
  #   clientId: ""
  #   clientSecret: ""
  #   organizationId: ""
  #   redirectUrl: ""
  #   requiredMembershipVerification: "true"
  # secrets:
  #   keystore: ""
  #   password: ""
  resources: {}
    # requests:
    #   cpu: 100m
    #   memory: 256Mi
    # limits:
    #   cpu: 200m
    #   memory: 512Mi

nexusProxyRoute:
  enabled: false
  labels:
  annotations:
  # path: /nexus

persistence:
  enabled: true
  accessMode: ReadWriteOnce
  ## If defined, storageClass: <storageClass>
  ## If set to "-", storageClass: "", which disables dynamic provisioning
  ## If undefined (the default) or set to null, no storageClass spec is
  ## set, choosing the default provisioner. (gp2 on AWS, standard on
  ## GKE, AWS & OpenStack)
  ##
  # existingClaim:
  # annotations:
  #   "helm.sh/resource-policy": keep
  # storageClass: "-"
  storageSize: 8Gi
  # If PersistentDisk already exists you can create a PV for it by including the 2 following keypairs.
  # pdName: nexus-data-disk
  # fsType: ext4

nexusBackup:
  enabled: false
  imageName: quay.io/travelaudience/docker-nexus-backup
  imageTag: 1.5.0
  imagePullPolicy: IfNotPresent
  env:
    targetBucket:
  nexusAdminPassword: "admin123"
  persistence:
    enabled: true
    # existingClaim:
    # annotations:
    #   "helm.sh/resource-policy": keep
    accessMode: ReadWriteOnce
    # See comment above for information on setting the backup storageClass
    # storageClass: "-"
    storageSize: 8Gi
    # If PersistentDisk already exists you can create a PV for it by including the 2 following keypairs.
    # pdName: nexus-backup-disk
    # fsType: ext4

ingress:
  enabled: false
  path: /
  annotations: {}
  # # NOTE: Can't use 'false' due to https://github.com/jetstack/kube-lego/issues/173.
  # kubernetes.io/ingress.allow-http: true
  # kubernetes.io/ingress.class: gce
  # kubernetes.io/ingress.global-static-ip-name: ""
  # kubernetes.io/tls-acme: true
  tls:
    enabled: true
    secretName: nexus-tls
  # Specify custom rules in addition to or instead of the nexus-proxy rules
  rules:
  # - host: http://nexus.127.0.0.1.nip.io
  #   http:
  #     paths:
  #     - backend:
  #         serviceName: additional-svc
  #         servicePort: 80

tolerations: []

# # Enable configmap and add data in configmap
config:
  enabled: false
  mountPath: /sonatype-nexus-conf
  data:

deployment:
  # # Add annotations in deployment to enhance deployment configurations
  annotations: {}
  # # Add init containers. e.g. to be used to give specific permissions for nexus-data.
  # # Add your own init container or uncomment and modify the given example.
  initContainers:
  # - name: fmp-volume-permission
  #   image: busybox
  #   imagePullPolicy: IfNotPresent
  #   command: ['chown','-R', '200', '/nexus-data']
  #   volumeMounts:
  #     - name: nexus-data
  #       mountPath: /nexus-data
  # # Uncomment and modify this to run a command after starting the nexus container.
  postStart:
    command: # '["/bin/sh", "-c", "ls"]'
  additionalContainers:
  additionalVolumes:
  additionalVolumeMounts:

# # To use an additional secret, set enable to true and add data
secret:
  enabled: false
  mountPath: /etc/secret-volume
  readOnly: true
  data:

# # To use an additional service, set enable to true
service:
  # name: additional-svc
  enabled: false
  labels: {}
  annotations: {}
  ports:
  - name: nexus-service
    targetPort: 80
    port: 80
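One detail worth pulling out of the comments in that file is the heap sizing rule. As a rough check, sketched in Python with names of my own choosing, the rule can be turned into arithmetic. To reproduce the 4800Mi figure from the chart comment, the sketch counts 2G as 2000M and 4G as 4000M; that rounding is my assumption, not something the chart states.

```python
def min_ram_mb(xmx_mb, max_direct_mb):
    """Smallest RAM (MB) satisfying Xmx + MaxDirectMemory <= RAM * 2/3."""
    total = xmx_mb + max_direct_mb
    return -(-total * 3 // 2)  # ceiling of total * 3 / 2 using integer math


def check_jvm_sizing(xms_mb, xmx_mb, max_direct_mb, ram_mb):
    """Return the rules from the chart comment that the given settings violate."""
    problems = []
    if xms_mb != xmx_mb:
        problems.append("Xms should equal Xmx")
    if xmx_mb > 4000:
        problems.append("Xmx should be at most 4G")
    if max_direct_mb < 2000:
        problems.append("MaxDirectMemory should be at least 2G")
    if ram_mb < min_ram_mb(xmx_mb, max_direct_mb):
        problems.append("Xmx + MaxDirectMemory exceeds 2/3 of RAM")
    return problems


# Values from install4jAddVmParams: -Xms1200M -Xmx1200M -XX:MaxDirectMemorySize=2G
print(min_ram_mb(1200, 2000))
print(check_jvm_sizing(1200, 1200, 2000, 4800))
```

With resources: {} as in the file, no memory request is actually set, so this only matters if you uncomment the requests block.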

There is a lot there, but the important parts are the proxy port and telling Nexus to accept calls from 127.0.0.1:

nexusProxy:
  port: 8080
  targetPort: 8080
  # labels: {}
  env:
    nexusDockerHost: 127.0.0.1
    nexusHttpHost: 127.0.0.1

We can then launch the helm chart:

$ helm install stable/sonatype-nexus -f ~/Downloads/values_01.yaml

NAME: dusty-hamster

LAST DEPLOYED: Tue Jan 7 07:20:44 2020

NAMESPACE: default

STATUS: DEPLOYED

RESOURCES:

==> v1/Deployment

NAME READY UP-TO-DATE AVAILABLE AGE

dusty-hamster-sonatype-nexus 0/1 0 0 0s

==> v1/PersistentVolumeClaim

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

dusty-hamster-sonatype-nexus-data Pending linode-block-storage 0s

==> v1/Pod(related)

NAME READY STATUS RESTARTS AGE

dusty-hamster-sonatype-nexus-75c5866fb-8gszt 0/2 Pending 0 0s

==> v1/Service

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

dusty-hamster-sonatype-nexus NodePort 10.128.145.15 <none> 8080:31617/TCP 0s

NOTES:

1. To access Nexus:

NOTE: It may take a few minutes for the ingress load balancer to become available or the backends to become HEALTHY.

You can watch the status of the backends by running:

`kubectl get ingress -o jsonpath='{.items[*].metadata.annotations.ingress\.kubernetes\.io/backends}'`

To access Nexus you can check:

http://127.0.0.1

2. Login with the following credentials

username: admin

password: admin123

3. Change Your password after the first login

4. Next steps in configuration

Please follow the link below to the README for nexus configuration, usage, backups and DR info:

https://github.com/helm/charts/tree/master/stable/sonatype-nexus#after-installing-the-chart

Now you may think the user/pass is admin/admin123. I mean, the chart just said that. But it's not true. You'll need to get the password from the pod.

$ kubectl get pods --all-namespaces | grep nexus

default dusty-hamster-sonatype-nexus-75c5866fb-8gszt 1/2 Running 0 62s

$ kubectl get pods --all-namespaces | grep nexus

default dusty-hamster-sonatype-nexus-75c5866fb-8gszt 2/2 Running 0 7m53s

Pro-Tip: Wait a bit if you get an error on the below command. The pod has several containers and you may get a “No such file or directory” if you do this too soon.

$ kubectl exec dusty-hamster-sonatype-nexus-75c5866fb-8gszt cat /nexus-data/admin.password

Defaulting container name to nexus.

Use 'kubectl describe pod/dusty-hamster-sonatype-nexus-75c5866fb-8gszt -n default' to see all of the containers in this pod.

ea8bfebe-2d10-4fd2-82e2-fe6ec1b5b9f9
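Because the password file may not exist for the first minutes, the wait can be scripted. This is a minimal sketch: read_admin_password shells out to the same kubectl command as above (with the nexus container named explicitly), while wait_for is a generic retry helper of my own. The attempt count and delay are arbitrary choices.

```python
import subprocess
import time


def read_admin_password(pod, namespace="default"):
    """Run the kubectl exec from above; return the password or None if not ready yet."""
    result = subprocess.run(
        ["kubectl", "exec", pod, "-n", namespace, "-c", "nexus",
         "--", "cat", "/nexus-data/admin.password"],
        stdout=subprocess.PIPE, stderr=subprocess.PIPE, universal_newlines=True,
    )
    if result.returncode != 0:
        return None
    return result.stdout.strip() or None


def wait_for(fetch, attempts=20, delay_seconds=15, sleep=time.sleep):
    """Call fetch() until it returns a value, pausing between attempts."""
    for attempt in range(attempts):
        value = fetch()
        if value is not None:
            return value
        if attempt < attempts - 1:
            sleep(delay_seconds)
    raise TimeoutError("gave up after {} attempts".format(attempts))
```

A call such as wait_for(lambda: read_admin_password("dusty-hamster-sonatype-nexus-75c5866fb-8gszt")) would then block until the file is readable or the attempts run out.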

With a port-forward in place, we can log in with admin/ea8bfebe-2d10-4fd2-82e2-fe6ec1b5b9f9 (the password from above):

$ kubectl port-forward dusty-hamster-sonatype-nexus-75c5866fb-8gszt 8080:8080

Forwarding from 127.0.0.1:8080 -> 8080

Forwarding from [::1]:8080 -> 8080

Create a repository to proxy the ACR:

Then choose docker (proxy) for type:

Set a name and a URL that matches ACR. Check “Use certificates stored in Nexus truststore”, then click “View Certificate” to add the certificate to our trust store.

It will show the azurecr.io cert; click add to trust store and you’ll get a notice:

Lastly, choose http auth and enter the details from ACR:

Once we click create, we should see the proxy listed in the list:

As it stands, it will be empty:

However, I tried many things, including setting insecure registries to 127.0.0.1:5003, 8080 and other ports, and port-forwarding in several ways. The pod only exposes 8080 via the service and the chart doesn’t seem to allow overrides, which makes it a challenge to actually use this containerized Nexus to host docker images. (Other users seem to have encountered this; see this abandoned GH issue.)

We may have success with interpod communication....

$ kubectl run my-shell --rm -i --tty --image ubuntu -- bash

kubectl run --generator=deployment/apps.v1 is DEPRECATED and will be removed in a future version. Use kubectl run --generator=run-pod/v1 or kubectl create instead.

If you don't see a command prompt, try pressing enter.

root@my-shell-7d5c87b77c-28g5w:/# docker -v

bash: docker: command not found

root@my-shell-7d5c87b77c-28g5w:/# apt update

Get:1 http://archive.ubuntu.com/ubuntu bionic InRelease [242 kB]

Get:2 http://security.ubuntu.com/ubuntu bionic-security InRelease [88.7 kB]

...snip...

root@my-shell-7d5c87b77c-28g5w:/# apt install docker.io

Reading package lists... Done

Building dependency tree

Reading state information... Done

The following additional packages will be installed:

apparmor bridge-utils ca-certificates cgroupfs-mount containerd dbus dmsetup dns-root-data dnsmasq-base file git git-man iproute2 iptables krb5-locales

less libapparmor1 libasn1-8-heimdal libatm1 libcurl3-gnutls libdbus-1-3 libdevmapper1.02.1 libedit2 libelf1 liberror-perl libexpat1 libgdbm-compat4

libgdbm5 libgssapi-krb5-2 libgssapi3-heimdal libhcrypto4-heimdal libheimbase1-heimdal libheimntlm0-heimdal libhx509-5-heimdal libidn11 libip4tc0 libip6tc0

libiptc0 libk5crypto3 libkeyutils1 libkrb5-26-heimdal libkrb5-3 libkrb5support0 libldap-2.4-2 libldap-common libmagic-mgc libmagic1 libmnl0 libmpdec2

libnetfilter-conntrack3 libnfnetlink0 libnghttp2-14 libperl5.26 libpsl5 libpython3-stdlib libpython3.6-minimal libpython3.6-stdlib libreadline7

libroken18-heimdal librtmp1 libsasl2-2 libsasl2-modules libsasl2-modules-db libsqlite3-0 libssl1.0.0 libssl1.1 libwind0-heimdal libxext6 libxmuu1

libxtables12 mime-support netbase netcat netcat-traditional openssh-client openssl patch perl perl-modules-5.26 pigz publicsuffix python3 python3-minimal

python3.6 python3.6-minimal readline-common runc ubuntu-fan xauth xz-utils

Suggested packages:

apparmor-profiles-extra apparmor-utils ifupdown default-dbus-session-bus | dbus-session-bus aufs-tools btrfs-progs debootstrap docker-doc rinse zfs-fuse

| zfsutils gettext-base git-daemon-run | git-daemon-sysvinit git-doc git-el git-email git-gui gitk gitweb git-cvs git-mediawiki git-svn iproute2-doc kmod

gdbm-l10n krb5-doc krb5-user libsasl2-modules-gssapi-mit | libsasl2-modules-gssapi-heimdal libsasl2-modules-ldap libsasl2-modules-otp libsasl2-modules-sql

keychain libpam-ssh monkeysphere ssh-askpass ed diffutils-doc perl-doc libterm-readline-gnu-perl | libterm-readline-perl-perl make python3-doc python3-tk

python3-venv python3.6-venv python3.6-doc binutils binfmt-support readline-doc

The following NEW packages will be installed:

apparmor bridge-utils ca-certificates cgroupfs-mount containerd dbus dmsetup dns-root-data dnsmasq-base docker.io file git git-man iproute2 iptables

krb5-locales less libapparmor1 libasn1-8-heimdal libatm1 libcurl3-gnutls libdbus-1-3 libdevmapper1.02.1 libedit2 libelf1 liberror-perl libexpat1

libgdbm-compat4 libgdbm5 libgssapi-krb5-2 libgssapi3-heimdal libhcrypto4-heimdal libheimbase1-heimdal libheimntlm0-heimdal libhx509-5-heimdal libidn11

libip4tc0 libip6tc0 libiptc0 libk5crypto3 libkeyutils1 libkrb5-26-heimdal libkrb5-3 libkrb5support0 libldap-2.4-2 libldap-common libmagic-mgc libmagic1

libmnl0 libmpdec2 libnetfilter-conntrack3 libnfnetlink0 libnghttp2-14 libperl5.26 libpsl5 libpython3-stdlib libpython3.6-minimal libpython3.6-stdlib

libreadline7 libroken18-heimdal librtmp1 libsasl2-2 libsasl2-modules libsasl2-modules-db libsqlite3-0 libssl1.0.0 libssl1.1 libwind0-heimdal libxext6

libxmuu1 libxtables12 mime-support netbase netcat netcat-traditional openssh-client openssl patch perl perl-modules-5.26 pigz publicsuffix python3

python3-minimal python3.6 python3.6-minimal readline-common runc ubuntu-fan xauth xz-utils

0 upgraded, 91 newly installed, 0 to remove and 1 not upgraded.

Need to get 77.7 MB of archives.

After this operation, 394 MB of additional disk space will be used.

Do you want to continue? [Y/n] Y

Get:1 http://archive.ubuntu.com/ubuntu bionic-updates/main amd64 libssl1.1 amd64 1.1.1-1ubuntu2.1~18.04.5 [1300 kB]

Get:2 http://archive.ubuntu.com/ubuntu bionic-updates/main amd64 libpython3.6-minimal amd64 3.6.9-1~18.04 [533 kB]

...

Updating certificates in /etc/ssl/certs...

0 added, 0 removed; done.

Running hooks in /etc/ca-certificates/update.d...

done.

root@my-shell-7d5c87b77c-28g5w:/#

root@my-shell-7d5c87b77c-28g5w:/# docker -v

Docker version 18.09.7, build 2d0083d

We can verify that Docker works by logging in to ACR:

root@my-shell-7d5c87b77c-28g5w:/# docker login lkedemocr.azurecr.io

Username: lkedemocr

Password:

WARNING! Your password will be stored unencrypted in /root/.docker/config.json.

Configure a credential helper to remove this warning. See

https://docs.docker.com/engine/reference/commandline/login/#credentials-store

Login Succeeded

However, no method I tried would log in to the local server, either via pod IP or service name.

I tried both the daemon and sysconfig approaches. But on Ubuntu, Docker isn't really running as a service.

$ kubectl describe pod mollified-eel-sonatype-nexus | grep IP:

Annotations: cni.projectcalico.org/podIP: 10.2.2.2/32

IP: 10.2.2.2

# /etc/docker/daemon.json

DOCKER_OPTS="--insecure-registry=http://10.2.2.2:5003 --insecure-registry=http://10.2.2.2"

(and)

DOCKER_OPTS="--insecure-registry=10.2.2.2:5003 --insecure-registry=10.2.2.2"

# cat /etc/docker/daemon.conf

{

"insecure-registries" : [ "10.2.2.2:5003" ]

}

However, nothing I did seemed to stick for HTTP:

# docker login http://10.2.2.2:5003

Username: admin

Password:

INFO[0003] Error logging in to v2 endpoint, trying next endpoint: Get https://10.2.2.2:5003/v2/: http: server gave HTTP response to HTTPS client

INFO[0004] Error logging in to v1 endpoint, trying next endpoint: Get https://10.2.2.2:5003/v1/users/: http: server gave HTTP response to HTTPS client

Get https://10.2.2.2:5003/v1/users/: http: server gave HTTP response to HTTPS client

And despite setting 5004 for HTTPS, that doesn't seem to work either.
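
For reference, the Docker daemon's default JSON configuration file on Linux is /etc/docker/daemon.json, and it is read when the daemon starts. A file named daemon.conf, or DOCKER_OPTS lines that no init script sources, may therefore never be picked up. Below is a minimal Python sketch that renders the JSON form of the setting. The helper name `render_daemon_json` is hypothetical, and stripping the URL scheme is an assumption based on the documented examples, which list bare host:port values.

```python
import json


def render_daemon_json(registries):
    """Render daemon.json text that marks each registry as insecure (plain HTTP allowed)."""
    cleaned = []
    for entry in registries:
        # Assumption: entries should be bare host[:port], so drop any URL scheme.
        for scheme in ("http://", "https://"):
            if entry.startswith(scheme):
                entry = entry[len(scheme):]
        cleaned.append(entry.rstrip("/"))
    return json.dumps({"insecure-registries": cleaned}, indent=2)


if __name__ == "__main__":
    # 10.2.2.2:5003 is the pod IP and Docker port used in this post.
    print(render_daemon_json(["http://10.2.2.2:5003"]))
```

The output would need to be written to /etc/docker/daemon.json and the daemon restarted before a plain-HTTP docker login could be expected to behave differently.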

Exposing via Public IP:

But chances are you want to route traffic from a public IP. We can create a simple NGINX ingress controller to direct traffic:

$ kubectl create namespace ingress-basic

namespace/ingress-basic created

$ helm repo add stable https://kubernetes-charts.storage.googleapis.com/

"stable" has been added to your repositories

$ helm install stable/nginx-ingress --namespace ingress-basic --set controller.replicaCount=2 --set controller.nodeSelector."beta\.kubernetes\.io/os"=linux --set defaultBackend.nodeSelector."beta\.kubernetes\.io/os"=linux

NAME: giggly-macaw

LAST DEPLOYED: Sat Jan 11 23:04:28 2020

NAMESPACE: ingress-basic

STATUS: DEPLOYED

RESOURCES:

==> v1/ClusterRole

NAME AGE

giggly-macaw-nginx-ingress 2s

==> v1/ClusterRoleBinding

NAME AGE

giggly-macaw-nginx-ingress 2s

==> v1/Deployment

NAME READY UP-TO-DATE AVAILABLE AGE

giggly-macaw-nginx-ingress-controller 0/2 2 0 1s

giggly-macaw-nginx-ingress-default-backend 0/1 1 0 1s

==> v1/Pod(related)

NAME READY STATUS RESTARTS AGE

giggly-macaw-nginx-ingress-controller-78774bd85b-knw8x 0/1 ContainerCreating 0 1s

giggly-macaw-nginx-ingress-controller-78774bd85b-pn54t 0/1 ContainerCreating 0 1s

giggly-macaw-nginx-ingress-default-backend-5fbcbb94b7-d96j2 0/1 ContainerCreating 0 1s

==> v1/Role

NAME AGE

giggly-macaw-nginx-ingress 1s

==> v1/RoleBinding

NAME AGE

giggly-macaw-nginx-ingress 1s

==> v1/Service

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

giggly-macaw-nginx-ingress-controller LoadBalancer 10.128.202.131 <pending> 80:30746/TCP,443:30869/TCP 1s

giggly-macaw-nginx-ingress-default-backend ClusterIP 10.128.115.170 <none> 80/TCP 1s

==> v1/ServiceAccount

NAME SECRETS AGE

giggly-macaw-nginx-ingress 1 2s

giggly-macaw-nginx-ingress-backend 1 2s

==> v1beta1/PodDisruptionBudget

NAME MIN AVAILABLE MAX UNAVAILABLE ALLOWED DISRUPTIONS AGE

giggly-macaw-nginx-ingress-controller 1 N/A 0 2s

NOTES:

The nginx-ingress controller has been installed.

It may take a few minutes for the LoadBalancer IP to be available.

You can watch the status by running 'kubectl --namespace ingress-basic get services -o wide -w giggly-macaw-nginx-ingress-controller'

An example Ingress that makes use of the controller:

apiVersion: extensions/v1beta1

kind: Ingress

metadata:

annotations:

kubernetes.io/ingress.class: nginx

name: example

namespace: foo

spec:

rules:

- host: www.example.com

http:

paths:

- backend:

serviceName: exampleService

servicePort: 80

path: /

# This section is only required if TLS is to be enabled for the Ingress

tls:

- hosts:

- www.example.com

secretName: example-tls

If TLS is enabled for the Ingress, a Secret containing the certificate and key must also be provided:

apiVersion: v1

kind: Secret

metadata:

name: example-tls

namespace: foo

data:

tls.crt: <base64 encoded cert>

tls.key: <base64 encoded key>

type: kubernetes.io/tls

You can get External IP:

$ kubectl get svc -n ingress-basic

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

giggly-macaw-nginx-ingress-controller LoadBalancer 10.128.202.131 45.79.61.93 80:30746/TCP,443:30869/TCP 2m18s

giggly-macaw-nginx-ingress-default-backend ClusterIP 10.128.115.170 <none> 80/TCP 2m18s

Here we can now apply the IP to the values.yaml:

statefulset:

enabled: false

replicaCount: 1

# By default deploymentStrategy is set to rollingUpdate with maxSurge of 25% and maxUnavailable of 25% . you can change type to `Recreate` or can uncomment `rollingUpdate` specification and adjust them to your usage.

deploymentStrategy: {}

# rollingUpdate:

# maxSurge: 25%

# maxUnavailable: 25%

# type: RollingUpdate

nexus:

imageName: quay.io/travelaudience/docker-nexus

imageTag: 3.17.0

imagePullPolicy: IfNotPresent

env:

- name: install4jAddVmParams

value: "-Xms1200M -Xmx1200M -XX:MaxDirectMemorySize=2G -XX:+UnlockExperimentalVMOptions -XX:+UseCGroupMemoryLimitForHeap"

# nodeSelector:

# cloud.google.com/gke-nodepool: default-pool

resources: {}

# requests:

## Based on https://support.sonatype.com/hc/en-us/articles/115006448847#mem

## and https://twitter.com/analytically/status/894592422382063616:

## Xms == Xmx

## Xmx <= 4G

## MaxDirectMemory >= 2G

## Xmx + MaxDirectMemory <= RAM * 2/3 (hence the request for 4800Mi)

## MaxRAMFraction=1 is not being set as it would allow the heap

## to use all the available memory.

# cpu: 250m

# memory: 4800Mi

# The ports should only be changed if the nexus image uses a different port

dockerPort: 5003

nexusPort: 8081

service:

type: NodePort

# clusterIP: None

# annotations: {}

## When using LoadBalancer service type, use the following AWS certificate from ACM

## https://aws.amazon.com/documentation/acm/

# service.beta.kubernetes.io/aws-load-balancer-ssl-cert: "arn:aws:acm:eu-west-1:123456789:certificate/abc123-abc123-abc123-abc123"

# service.beta.kubernetes.io/aws-load-balancer-backend-protocol: "https"

# service.beta.kubernetes.io/aws-load-balancer-backend-port: "https"

## When using LoadBalancer service type, whitelist these source IP ranges

## https://kubernetes.io/docs/tasks/access-application-cluster/configure-cloud-provider-firewall/

# loadBalancerSourceRanges:

# - 192.168.1.10/32

# labels: {}

# securityContext:

# fsGroup: 2000

podAnnotations: {}

livenessProbe:

initialDelaySeconds: 30

periodSeconds: 30

failureThreshold: 6

# timeoutSeconds: 10

path: /

readinessProbe:

initialDelaySeconds: 30

periodSeconds: 30

failureThreshold: 6

# timeoutSeconds: 10

path: /

# hostAliases allows the modification of the hosts file inside a container

hostAliases: []

# - ip: "192.168.1.10"

# hostnames:

# - "example.com"

# - "www.example.com"

route:

enabled: false

name: docker

portName: docker

labels:

annotations:

# path: /docker

nexusProxy:

enabled: true

# svcName: proxy-svc

imageName: quay.io/travelaudience/docker-nexus-proxy

imageTag: 2.5.0

imagePullPolicy: IfNotPresent

port: 8080

targetPort: 8080

# labels: {}

env:

nexusDockerHost: 45.79.61.93

nexusHttpHost: 45.79.61.93

enforceHttps: false

cloudIamAuthEnabled: false

## If cloudIamAuthEnabled is set to true uncomment the variables below and remove this line

# clientId: ""

# clientSecret: ""

# organizationId: ""

# redirectUrl: ""

# requiredMembershipVerification: "true"

# secrets:

# keystore: ""

# password: ""

resources: {}

# requests:

# cpu: 100m

# memory: 256Mi

# limits:

# cpu: 200m

# memory: 512Mi

nexusProxyRoute:

enabled: false

labels:

annotations:

# path: /nexus

persistence:

enabled: true

accessMode: ReadWriteOnce

## If defined, storageClass: <storageClass>

## If set to "-", storageClass: "", which disables dynamic provisioning

## If undefined (the default) or set to null, no storageClass spec is

## set, choosing the default provisioner. (gp2 on AWS, standard on

## GKE, AWS & OpenStack)

##

# existingClaim:

# annotations:

# "helm.sh/resource-policy": keep

# storageClass: "-"

storageSize: 8Gi

# If PersistentDisk already exists you can create a PV for it by including the 2 following keypairs.

# pdName: nexus-data-disk

# fsType: ext4

nexusBackup:

enabled: false

imageName: quay.io/travelaudience/docker-nexus-backup

imageTag: 1.5.0

imagePullPolicy: IfNotPresent

env:

targetBucket:

nexusAdminPassword: "admin123"

persistence:

enabled: true

# existingClaim:

# annotations:

# "helm.sh/resource-policy": keep

accessMode: ReadWriteOnce

# See comment above for information on setting the backup storageClass

# storageClass: "-"

storageSize: 8Gi

# If PersistentDisk already exists you can create a PV for it by including the 2 following keypairs.

# pdName: nexus-backup-disk

# fsType: ext4

ingress:

enabled: false

path: /

annotations: {}

# # NOTE: Can't use 'false' due to https://github.com/jetstack/kube-lego/issues/173.

# kubernetes.io/ingress.allow-http: true

# kubernetes.io/ingress.class: gce

# kubernetes.io/ingress.global-static-ip-name: ""

# kubernetes.io/tls-acme: true

tls:

enabled: true

secretName: nexus-tls

# Specify custom rules in addition to or instead of the nexus-proxy rules

rules:

# - host: http://nexus.127.0.0.1.nip.io

# http:

# paths:

# - backend:

# serviceName: additional-svc

# servicePort: 80

tolerations: []

# # Enable configmap and add data in configmap

config:

enabled: false

mountPath: /sonatype-nexus-conf

data:

deployment:

# # Add annotations in deployment to enhance deployment configurations

annotations: {}

# # Add init containers. e.g. to be used to give specific permissions for nexus-data.

# # Add your own init container or uncomment and modify the given example.

initContainers:

# - name: fmp-volume-permission

# image: busybox

# imagePullPolicy: IfNotPresent

# command: ['chown','-R', '200', '/nexus-data']

# volumeMounts:

# - name: nexus-data

# mountPath: /nexus-data

# # Uncomment and modify this to run a command after starting the nexus container.

postStart:

command: # '["/bin/sh", "-c", "ls"]'

additionalContainers:

additionalVolumes:

additionalVolumeMounts:

# # To use an additional secret, set enable to true and add data

secret:

enabled: false

mountPath: /etc/secret-volume

readOnly: true

data:

# # To use an additional service, set enable to true

service:

# name: additional-svc

enabled: false

labels: {}

annotations: {}

ports:

- name: nexus-service

targetPort: 80

port: 80
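
Most of the file above looks like stock chart content; the lines tied to this cluster are the two nexusProxy.env host entries that carry the ingress IP. As a rough sketch, and assuming every other key can stay at whatever the chart ships with, those overrides can be generated on their own. The function name `minimal_overrides` is hypothetical. The output is JSON, which YAML parsers also accept.

```python
import json


def minimal_overrides(public_ip):
    """Return only the values that tie the chart to this cluster's public IP."""
    return {
        "nexusProxy": {
            "env": {
                # Both keys appear in the values.yaml above with the same IP.
                "nexusDockerHost": public_ip,
                "nexusHttpHost": public_ip,
            }
        }
    }


print(json.dumps(minimal_overrides("45.79.61.93"), indent=2))
```

Whether the proxy can tell Docker traffic from UI traffic when both hosts are identical is not something this post verifies. The install that follows uses the full file shown above.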

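We can now install with those values. Note that with Helm 2, as used here, -n sets the release name rather than the namespace, so the release is named ingress-basic and lands in the default namespace: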

$ helm install stable/sonatype-nexus -f ~/Downloads/values.yaml -n ingress-basic

NAME: ingress-basic

LAST DEPLOYED: Sat Jan 11 23:13:54 2020

NAMESPACE: default

STATUS: DEPLOYED

RESOURCES:

==> v1/Deployment

NAME READY UP-TO-DATE AVAILABLE AGE

ingress-basic-sonatype-nexus 0/1 1 0 1s

==> v1/PersistentVolumeClaim

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

ingress-basic-sonatype-nexus-data Pending linode-block-storage 1s

==> v1/Pod(related)

NAME READY STATUS RESTARTS AGE

ingress-basic-sonatype-nexus-588c66f97c-hd5wv 0/2 Pending 0 1s

==> v1/Service

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

ingress-basic-sonatype-nexus NodePort 10.128.64.105 <none> 8080:30375/TCP 1s

NOTES:

1. To access Nexus:

NOTE: It may take a few minutes for the ingress load balancer to become available or the backends to become HEALTHY.

You can watch the status of the backends by running:

`kubectl get ingress -o jsonpath='{.items[*].metadata.annotations.ingress\.kubernetes\.io/backends}'`

To access Nexus you can check:

http://45.79.61.93

2. Login with the following credentials

username: admin

password: admin123

3. Change Your password after the first login

4. Next steps in configuration

Please follow the link below to the README for nexus configuration, usage, backups and DR info:

https://github.com/helm/charts/tree/master/stable/sonatype-nexus#after-installing-the-chart

Now update ingress_test.yaml to point at the service from the helm install above:

$ cat ~/Downloads/ingress_test.yaml

apiVersion: extensions/v1beta1

kind: Ingress

metadata:

name: hello-kubernetes-ingress

annotations:

kubernetes.io/ingress.class: nginx

nginx.ingress.kubernetes.io/rewrite-target: /

spec:

rules:

- http:

paths:

- path: /

backend:

serviceName: ingress-basic-sonatype-nexus

servicePort: 8080

$ kubectl apply -f ~/Downloads/ingress_test.yaml

ingress.extensions/hello-kubernetes-ingress created
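
Since the manifest listing above is shown without indentation, here is the same structure as a nested Python dict, which makes the nesting of rules, paths and backend explicit. This is only a sketch: `build_ingress` is a hypothetical helper, and it keeps the extensions/v1beta1 API version used in this post.

```python
import json


def build_ingress(name, service_name, service_port):
    """Return an Ingress manifest (extensions/v1beta1, as used in this post) as a dict."""
    return {
        "apiVersion": "extensions/v1beta1",
        "kind": "Ingress",
        "metadata": {
            "name": name,
            "annotations": {
                "kubernetes.io/ingress.class": "nginx",
                "nginx.ingress.kubernetes.io/rewrite-target": "/",
            },
        },
        "spec": {
            "rules": [
                {
                    "http": {
                        "paths": [
                            {
                                "path": "/",
                                "backend": {
                                    "serviceName": service_name,
                                    "servicePort": service_port,
                                },
                            }
                        ]
                    }
                }
            ]
        },
    }


manifest = build_ingress("hello-kubernetes-ingress", "ingress-basic-sonatype-nexus", 8080)
print(json.dumps(manifest, indent=2))
```

kubectl apply -f accepts JSON as well as YAML, so the printed output could be saved to a file and applied in the same way.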

This should now expose Nexus. We can get the password as we did earlier:

$ kubectl get pods --all-namespaces | grep nexus

default ingress-basic-sonatype-nexus-588c66f97c-hd5wv 2/2 Running 0 16m

$ kubectl exec ingress-basic-sonatype-nexus-588c66f97c-hd5wv cat /nexus-data/admin.password

Defaulting container name to nexus.

Use 'kubectl describe pod/ingress-basic-sonatype-nexus-588c66f97c-hd5wv -n default' to see all of the containers in this pod.

800aef06-425e-411b-bd9b-e7451f5114ed

Now I'm going to pause here. I tried many ways to log in via docker, including using the same service on a different port and creating a new service on the port:

$ kubectl get svc ingress-basic-sonatype-nexus-docker -o yaml

apiVersion: v1

kind: Service

metadata:

annotations:

kubectl.kubernetes.io/last-applied-configuration: |

{"apiVersion":"v1","kind":"Service","metadata":{"annotations":{},"labels":{"app":"sonatype-nexus","chart":"sonatype-nexus-1.21.3","fullname":"ingress-basic-sonatype-nexus-docker","heritage":"Tiller","release":"ingress-basic"},"name":"ingress-basic-sonatype-nexus-docker","namespace":"default"},"spec":{"ports":[{"name":"ingress-basic-sonatype-nexus","nodePort":30376,"port":5003,"protocol":"TCP","targetPort":5003}],"selector":{"app":"sonatype-nexus-docker","release":"ingress-basic"},"sessionAffinity":"None","type":"NodePort"},"status":{"loadBalancer":{}}}

creationTimestamp: "2020-01-12T05:43:06Z"

labels:

app: sonatype-nexus

chart: sonatype-nexus-1.21.3

fullname: ingress-basic-sonatype-nexus-docker

heritage: Tiller

release: ingress-basic

name: ingress-basic-sonatype-nexus-docker

namespace: default

resourceVersion: "14111"

selfLink: /api/v1/namespaces/default/services/ingress-basic-sonatype-nexus-docker

uid: 0e64bfb2-16f2-4796-9b78-5966e0f6fe87

spec:

clusterIP: 10.128.193.7

externalTrafficPolicy: Cluster

ports:

- name: ingress-basic-sonatype-nexus

nodePort: 30376

port: 5003

protocol: TCP

targetPort: 5003

selector:

app: sonatype-nexus-docker

release: ingress-basic

sessionAffinity: None

type: NodePort

status:

loadBalancer: {}
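
One detail in the service above may be worth checking: its selector asks for app: sonatype-nexus-docker, while the chart-created service shown later in this post selects app: sonatype-nexus. A Service only routes to pods whose labels contain every selector key and value. The sketch below illustrates that rule; the pod labels are an assumption inferred from the chart-created service's selector, not read from the actual pod.

```python
def selector_matches(selector, pod_labels):
    """A Service selects a pod only if every selector key/value is present in the pod's labels."""
    return all(pod_labels.get(key) == value for key, value in selector.items())


# Assumed pod labels, inferred from the selector of the chart-created service.
nexus_pod_labels = {"app": "sonatype-nexus", "release": "ingress-basic"}

docker_service_selector = {"app": "sonatype-nexus-docker", "release": "ingress-basic"}
chart_service_selector = {"app": "sonatype-nexus", "release": "ingress-basic"}

print(selector_matches(docker_service_selector, nexus_pod_labels))  # False
print(selector_matches(chart_service_selector, nexus_pod_labels))   # True
```

If the labels are as assumed, kubectl get endpoints ingress-basic-sonatype-nexus-docker would list no addresses, and an ingress pointing at that service would have no backend to forward to.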

$ cat ~/Downloads/ingress_test.yaml

apiVersion: extensions/v1beta1

kind: Ingress

metadata:

name: hello-kubernetes-ingress

annotations:

kubernetes.io/ingress.class: nginx

nginx.ingress.kubernetes.io/rewrite-target: /

spec:

rules:

- http:

paths:

- path: /

backend:

serviceName: ingress-basic-sonatype-nexus-docker

servicePort: 5003

But no matter how I did it, I couldn't get past login:

$ docker login http://45.79.61.93

Username: admin

Password:

Error response from daemon: login attempt to http://45.79.61.93/v2/ failed with status: 503 Service Unavailable

Let’s try AKS

$ az group create --name idj-nexus-oss-rg --location centralus

{

"id": "/subscriptions/d955c0ba-13dc-44cf-a29a-8fed74cbb22d/resourceGroups/idj-nexus-oss-rg",

"location": "centralus",

"managedBy": null,

"name": "idj-nexus-oss-rg",

"properties": {

"provisioningState": "Succeeded"

},

"tags": null,

"type": null

}

Now create the AKS instance:

builder@DESKTOP-JBA79RT:/mnt/c/WINDOWS/system32$ az aks create \

> --resource-group idj-nexus-oss-rg \

> --name k8stest \

> --generate-ssh-keys \

> --aad-server-app-id f61ad37a-3a16-498b-ad8c-812c1a82d541 \

> --aad-server-app-secret eYxuMc0n6YCm8exy=ulFC-Vv5iZvvi]? \

> --aad-client-app-id dc248705-0b14-4ff2-82c2-5b6a5260c62b \

> --aad-tenant-id 28c575f6-ade1-4838-8e7c-7e6d1ba0eb4a

SSH key files '/home/builder/.ssh/id_rsa' and '/home/builder/.ssh/id_rsa.pub' have been generated under ~/.ssh to allow SSH access to the VM. If using machines without permanent storage like Azure Cloud Shell without an attached file share, back up your keys to a safe location

Finished service principal creation[##################################] 100.0000%Operation failed with status: 'Bad Request'. Details: The credentials in ServicePrincipalProfile were invalid. Please see https://aka.ms/aks-sp-help for more details. (Details: adal: Refresh request failed. Status Code = '400'. Response body: {"error":"unauthorized_client","error_description":"AADSTS700016: Application with identifier '227bd8ea-c5fe-4d5e-9bb5-b420d90137d0' was not found in the directory '28c575f6-ade1-4838-8e7c-7e6d1ba0eb4a'. This can happen if the application has not been installed by the administrator of the tenant or consented to by any user in the tenant. You may have sent your authentication request to the wrong tenant.\r\nTrace ID: 3c9881be-a7b5-4151-b204-7c5cae435201\r\nCorrelation ID: 8d528079-032f-45ea-a05f-e1618a116772\r\nTimestamp: 2020-01-08 03:09:03Z","error_codes":[700016],"timestamp":"2020-01-08 03:09:03Z","trace_id":"3c9881be-a7b5-4151-b204-7c5cae435201","correlation_id":"8d528079-032f-45ea-a05f-e1618a116772","error_uri":"https://login.microsoftonline.com/error?code=700016"})

The first attempt failed with a service principal error; rerunning the same command succeeded:

builder@DESKTOP-JBA79RT:/mnt/c/WINDOWS/system32$ az aks create --resource-group idj-nexus-oss-rg --name k8stest --generate-ssh-keys --aad-server-app-id f61ad37a-3a16-498b-ad8c-812c1a82d541 --aad-server-app-secret eYxuMc0n6YCm8exy=ulFC-Vv5iZvvi]? --aad-client-app-id dc248705-0b14-4ff2-82c2-5b6a5260c62b --aad-tenant-id 28c575f6-ade1-4838-8e7c-7e6d1ba0eb4a

{

"aadProfile": {

"clientAppId": "dc248705-0b14-4ff2-82c2-5b6a5260c62b",

"serverAppId": "f61ad37a-3a16-498b-ad8c-812c1a82d541",

"serverAppSecret": null,

"tenantId": "28c575f6-ade1-4838-8e7c-7e6d1ba0eb4a"

},

"addonProfiles": null,

"agentPoolProfiles": [

{

"availabilityZones": null,

"count": 3,

"enableAutoScaling": null,

"maxCount": null,

"maxPods": 110,

"minCount": null,

"name": "nodepool1",

"orchestratorVersion": "1.14.8",

"osDiskSizeGb": 100,

"osType": "Linux",

"provisioningState": "Succeeded",

"type": "AvailabilitySet",

"vmSize": "Standard_DS2_v2",

"vnetSubnetId": null

}

],

"apiServerAuthorizedIpRanges": null,

"dnsPrefix": "k8stest-idj-nexus-oss-rg-d955c0",

"enablePodSecurityPolicy": null,

"enableRbac": true,

"fqdn": "k8stest-idj-nexus-oss-rg-d955c0-3d4c1461.hcp.centralus.azmk8s.io",

"id": "/subscriptions/d955c0ba-13dc-44cf-a29a-8fed74cbb22d/resourcegroups/idj-nexus-oss-rg/providers/Microsoft.ContainerService/managedClusters/k8stest",

"identity": null,

"kubernetesVersion": "1.14.8",

"linuxProfile": {

"adminUsername": "azureuser",

"ssh": {

"publicKeys": [

{

"keyData": "ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABAQDLzysqDWJpJ15Sho/NYk3ZHzC36LHw5zE1gyxhEQCH53BSbgA39XVXs/8TUjrkoVi6/YqlliYVg7TMQSjG51d3bLuelMh7IGIPGqSnT5rQe4x9ugdi+rLeFgP8+rf9aGYwkKMd98Aj2i847/deNLFApDoTtI54obZDuhu2ySW23BiQqV3lXuIe/0WwKpG0MFMoXU9JrygPXyNKbgJHR7pLR9U8WVLMF51fmUEeKb5johgrKeIrRMKBtiijaJO8NP6ULuOcQ+Z0VpUUbZZpIqeo8wqdMbDHkyFqh5a5Z1qrY5uDSpqcElqR5SiVesumUfMTBxz83/oprz23e747h8rP"

}

]

}

},

"location": "centralus",

"maxAgentPools": 1,

"name": "k8stest",

"networkProfile": {

"dnsServiceIp": "10.0.0.10",

"dockerBridgeCidr": "172.17.0.1/16",

"loadBalancerSku": "Basic",

"networkPlugin": "kubenet",

"networkPolicy": null,

"podCidr": "10.244.0.0/16",

"serviceCidr": "10.0.0.0/16"

},

"nodeResourceGroup": "MC_idj-nexus-oss-rg_k8stest_centralus",

"provisioningState": "Succeeded",

"resourceGroup": "idj-nexus-oss-rg",

"servicePrincipalProfile": {

"clientId": "227bd8ea-c5fe-4d5e-9bb5-b420d90137d0",

"secret": null

},

"tags": null,

"type": "Microsoft.ContainerService/ManagedClusters",

"windowsProfile": null

}

Now log in to the cluster:

builder@DESKTOP-JBA79RT:/mnt/c/WINDOWS/system32$ az aks list -o table

Name Location ResourceGroup KubernetesVersion ProvisioningState Fqdn

------- ---------- ---------------- ------------------- ------------------- ----------------------------------------------------------------

k8stest centralus idj-nexus-oss-rg 1.14.8 Succeeded k8stest-idj-nexus-oss-rg-d955c0-3d4c1461.hcp.centralus.azmk8s.io

builder@DESKTOP-JBA79RT:/mnt/c/WINDOWS/system32$ az aks get-credentials -n k8stest -g idj-nexus-oss-rg --admin

/home/builder/.kube/config has permissions "777".

It should be readable and writable only by its owner.

Merged "k8stest-admin" as current context in /home/builder/.kube/config

Now install Nexus:

builder@DESKTOP-JBA79RT:~/linux-amd64$ ./helm install stable/sonatype-nexus -f values_01.yaml

NAME: modest-whale

LAST DEPLOYED: Tue Jan 7 22:07:58 2020

NAMESPACE: default

STATUS: DEPLOYED

RESOURCES:

==> v1/Deployment

NAME READY UP-TO-DATE AVAILABLE AGE

modest-whale-sonatype-nexus 0/1 1 0 1s

==> v1/PersistentVolumeClaim

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

modest-whale-sonatype-nexus-data Pending default 1s

==> v1/Pod(related)

NAME READY STATUS RESTARTS AGE

modest-whale-sonatype-nexus-68ff9bdd76-74vbk 0/2 Pending 0 0s

==> v1/Service

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

modest-whale-sonatype-nexus NodePort 10.0.212.234 <none> 8080:32764/TCP 1s

NOTES:

1. To access Nexus:

NOTE: It may take a few minutes for the ingress load balancer to become available or the backends to become HEALTHY.

You can watch the status of the backends by running:

`kubectl get ingress -o jsonpath='{.items[*].metadata.annotations.ingress\.kubernetes\.io/backends}'`

To access Nexus you can check:

http://127.0.0.1

2. Login with the following credentials

username: admin

password: admin123

3. Change Your password after the first login

4. Next steps in configuration

Please follow the link below to the README for nexus configuration, usage, backups and DR info:

https://github.com/helm/charts/tree/master/stable/sonatype-nexus#after-installing-the-chart

And get the password:

builder@DESKTOP-JBA79RT:~/linux-amd64$ kubectl exec modest-whale-sonatype-nexus-68ff9bdd76-74vbk cat /nexus-data/admin.password

Defaulting container name to nexus.

Use 'kubectl describe pod/modest-whale-sonatype-nexus-68ff9bdd76-74vbk -n default' to see all of the containers in this pod.

e1c3ce6a-fd68-49b4-bd20-8dc21f9e98fa

And we can set up an ingress here too:

builder@DESKTOP-JBA79RT:~/linux-amd64$ kubectl apply -f https://raw.githubusercontent.com/kubernetes/ingress-nginx/master/deploy/static/provider/baremetal/service-nodeport.yaml

service/ingress-nginx created

builder@DESKTOP-JBA79RT:~/linux-amd64$ kubectl get ingress --all-namespaces

No resources found.

builder@DESKTOP-JBA79RT:~/linux-amd64$ vi ingress_test.yaml

builder@DESKTOP-JBA79RT:~/linux-amd64$ kubectl apply -f ingress_test.yaml

ingress.extensions/hello-kubernetes-ingress created

builder@DESKTOP-JBA79RT:~/linux-amd64$ kubectl get ingress --all-namespaces

NAMESPACE NAME HOSTS ADDRESS PORTS AGE

default hello-kubernetes-ingress * 80 4m53s

However, in testing on both AKS and LKE, I got stuck on the ports for Docker.

I even hand-modified the service:

apiVersion: v1

kind: Service

metadata:

creationTimestamp: "2020-01-12T05:13:54Z"

labels:

app: sonatype-nexus

chart: sonatype-nexus-1.21.3

fullname: ingress-basic-sonatype-nexus

heritage: Tiller

release: ingress-basic

name: ingress-basic-sonatype-nexus

namespace: default

resourceVersion: "10175"

selfLink: /api/v1/namespaces/default/services/ingress-basic-sonatype-nexus

uid: 710580dd-cb8a-4baf-85d3-fbde20e590d7

spec:

clusterIP: 10.128.64.105

externalTrafficPolicy: Cluster

ports:

- name: ingress-basic-sonatype-nexus-docker

nodePort: 30378

port: 5003

protocol: TCP

targetPort: 5003

- name: ingress-basic-sonatype-nexus

nodePort: 30375

port: 8080

protocol: TCP

targetPort: 8080

selector:

app: sonatype-nexus

release: ingress-basic

sessionAffinity: None

type: NodePort

status:

loadBalancer: {}

And that still failed:

$ docker login 45.79.61.93

Username: admin

Password:

Error response from daemon: login attempt to http://45.79.61.93/v2/ failed with status: 503 Service Unavailable
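
The status code on /v2/ carries some diagnostic value. Under the Docker Registry HTTP API V2, a 200 or a 401 on that path means a V2 registry answered, whereas a 503 suggests that whatever answered (possibly the ingress) had no healthy backend to hand the request to. A small sketch of that reading follows; the function name and the wording of the categories are my own, not part of any tool.

```python
def classify_v2_probe(status_code):
    """Interpret the HTTP status returned by GET /v2/ on a would-be Docker registry."""
    if status_code in (200, 401):
        # Both mean a V2 registry answered; 401 just asks for credentials.
        return "registry reachable"
    if status_code == 404:
        return "endpoint answered, but not as a V2 registry"
    if status_code in (502, 503, 504):
        # Often reported by a proxy or ingress that cannot reach a healthy backend.
        return "backend unavailable"
    return "unexpected status"


print(classify_v2_probe(503))  # backend unavailable
```

Read this way, the login failure above points at routing between the ingress and the Nexus Docker port rather than at the credentials themselves.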

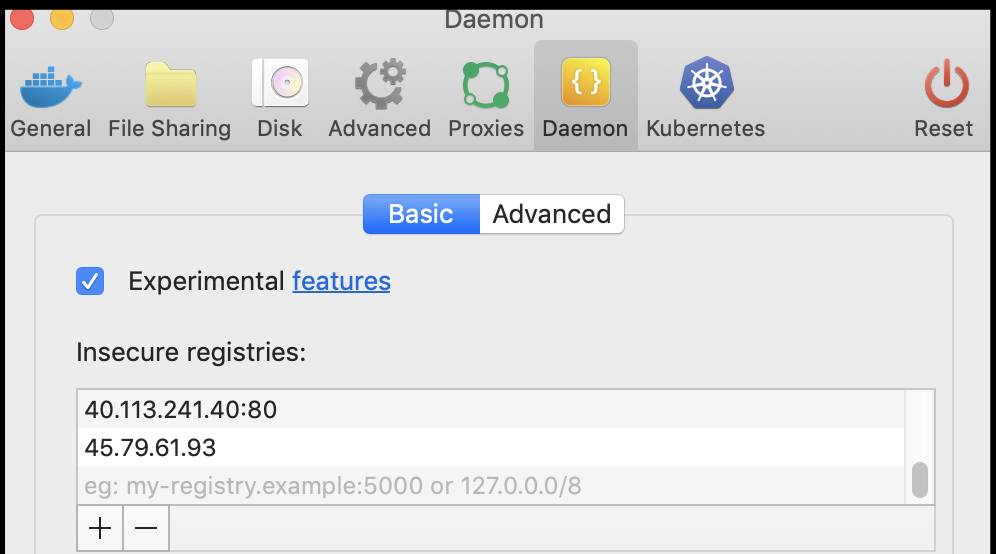

Even putting the exceptions into Docker Desktop did not help.

Summary

I've spent far more time than normal trying to get this to work. At some point, I need to wrap up the post.

My goal was to run a containerized artifact management suite like Nexus to host containers, hosted or proxied. The problem is that Nexus serves Docker on its own port, and that port isn't exposed via the Kubernetes config. This works fine locally or on a VM, but in a cluster it's a bit more challenging.

Nexus may work, but as a Helm chart, there appear to be some limitations. I look forward to exploring this further in future posts.